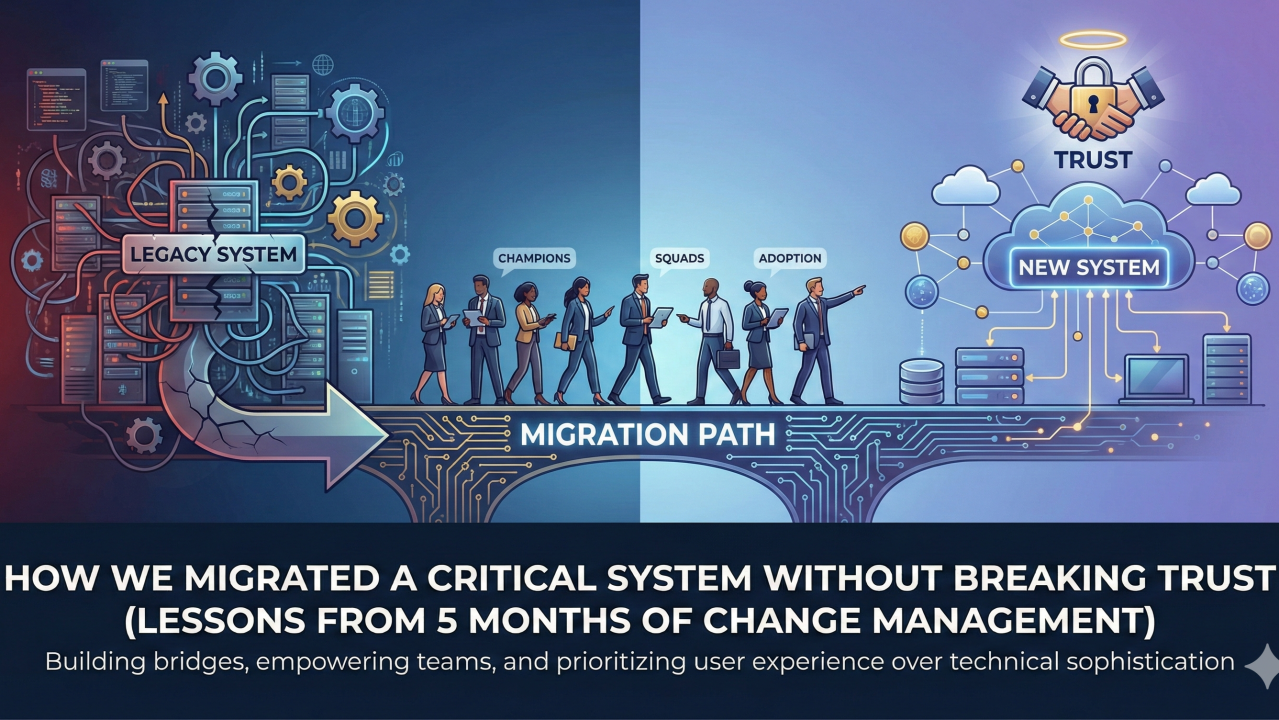

How We Migrated a Critical System Without Breaking Trust

Picture this: it’s 2 AM. A data pipeline just broke. Stakeholders are already sending messages. Your team is scrambling to diagnose the issue using muscle memory they’ve built over months — scanning logs, checking dashboards, following patterns they know by heart.

Now imagine telling them: “By the way, we’re changing how all of this works. New interface, new debugging flow, new mental models. Good luck.”

That was our challenge. We needed to migrate our data orchestration system while it was running in production, supporting critical pipelines that couldn’t afford extended downtime. No tests in the legacy system. Teams depending on it in high-pressure situations. And a complete rewrite that would change how they saw and interacted with failures.

Here’s what we learned.

The Mistake: Moving Everyone at Once

Our first instinct seemed logical: multiple squads shared the same codebase, so we would upgrade everyone together. One big push, everyone moves to the new system, done.

This created immediate anxiety. Some squads had more critical pipelines, more business impact, different release cycles, different comfort levels with change. Forcing them all to move in lockstep felt like we were ignoring their reality.

We had to pivot.

Isolate Squads, Build Champions

Instead of upgrading everyone at once, we let squads move individually. This did two things: it reduced perceived risk (“we’re not betting the farm on this”), and it gave us early wins we could point to.

But we needed someone to go first.

There are always people willing to change — early adopters who are frustrated with the current system or just excited by new things. We found them and invested heavily in these relationships. Not just “here’s the new system, try it out” — real partnership: listening sessions, beta access, tight feedback loops.

These people became our champions. They ran office hours for other teams. They gave demos. They tested new features and helped us refine the design. Their enthusiasm was contagious, and more importantly, it was credible — these were peers, not platform engineers telling everyone everything would be fine.
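Mechanically, letting squads move one at a time can be as simple as a per-squad routing table. The sketch below is illustrative only and assumes pipelines are tagged with an owning squad; the squad names, the `Orchestrator` enum, and `orchestrator_for` are all hypothetical.

```python
from __future__ import annotations

from enum import Enum


class Orchestrator(Enum):
    LEGACY = "legacy"
    NEW = "new"


# Hypothetical squad names. A squad is added here only when it opts in,
# so everyone else keeps running on the legacy system untouched.
MIGRATED_SQUADS = frozenset({"squad-a", "squad-b"})


def orchestrator_for(squad: str, migrated: frozenset[str] = MIGRATED_SQUADS) -> Orchestrator:
    """Route a squad's pipelines to the new system only after that squad has opted in."""
    return Orchestrator.NEW if squad in migrated else Orchestrator.LEGACY
```

Keeping the default as `LEGACY` means an unlisted squad is never moved by accident, which matches the "we're not betting the farm on this" framing.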

What Actually Worked

Once we had champions, a few specific tactics drove broader adoption:

Market using pain points. We didn’t lead with “look at all these cool new features.” We led with “remember that thing that drives you crazy in the old system? We fixed it.” Problems first, solutions second.

Strategic feature allocation. New features and improvements only went to the new system. The old system entered maintenance mode — critical bugs only. This created urgency without being heavy-handed. People could see where the momentum was.

Show concrete success stories. As each squad moved, we documented their experience and shared it with teams still on the fence. Social proof matters.

Don’t let environments diverge too long. One mistake we made: keeping squads in QA too long before moving them to production. This created frustration — they were living in the new world in QA but context-switching back to the old world in production. If I did this again, I’d invest in automated testing upfront to move squads through QA and into production faster.
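One form that upfront automated testing could take is a parity check: run the same sample input through the legacy and migrated versions of a pipeline and report any differences. This is a minimal sketch, assuming each pipeline step can be called as a plain function on a record; `parity_diff`, `legacy_pipeline`, and `new_pipeline` are hypothetical names, not part of any real system.

```python
from typing import Any, Callable, Iterable

Step = Callable[[dict], Any]


def parity_diff(legacy: Step, new: Step, sample: Iterable[dict]) -> list:
    """Return (input, legacy_output, new_output) for every input where outputs differ."""
    mismatches = []
    for record in sample:
        old_out = legacy(record)
        new_out = new(record)
        if old_out != new_out:
            mismatches.append((record, old_out, new_out))
    return mismatches


# Hypothetical stand-ins for one pipeline step before and after migration.
def legacy_pipeline(row: dict) -> dict:
    return {"id": row["id"], "total": row["price"] * row["qty"]}


def new_pipeline(row: dict) -> dict:
    return {"id": row["id"], "total": row["qty"] * row["price"]}


sample_rows = [{"id": 1, "price": 2, "qty": 3}, {"id": 2, "price": 5, "qty": 0}]

# An empty result means the migrated step matches the legacy one on this sample.
assert parity_diff(legacy_pipeline, new_pipeline, sample_rows) == []
```

A check like this only covers the sample it is given, but it gives a squad a concrete signal for leaving QA instead of an open-ended soak period.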

The Rollback That Taught Us Everything

About midway through the rollout, we hit a bug in the new system. One squad needed to roll back.

The bug itself? A one-line fix. Took maybe 30 minutes to identify and resolve.

The cost? Three weeks.

Not three weeks to fix the code — three weeks to rebuild momentum and trust. Teams who were about to migrate suddenly had doubts. Champions had to answer harder questions.

That rollback taught me more than any success: fix forward whenever possible. Rollbacks aren’t just technical — they’re psychological. They signal “this isn’t ready” and people remember that feeling longer than they remember the original bug.

From that point on, our bias was always toward fixing issues in place rather than reverting.

Getting the Last Squads Over the Line

Eventually we had a few squads that just weren’t prioritising the migration. Not hostile — just busy, risk-averse, or comfortable with the status quo.

For two of the ten squads, the least technical teams, we did something platform engineers often resist: we migrated their code for them. We converted their pipelines, tested them, and handed back a working system in the new world.

Scalable? No. But it removed their biggest barrier (fear of breaking something) and built trust for future changes.

For the remaining holdouts, we drew a line. Concrete success stories, a timeline for when the old system would sunset, and a clear message that the transition was happening — but we’d support them through it.

The Ratio

The code rewrite took about a month. The rollout took five months.

If you’re planning something similar, budget for that ratio: roughly five months of rollout for every month of code. Platform changes take longer than you think, and most of that time isn’t spent writing code. It’s spent listening, communicating, building trust, addressing concerns, and sometimes just waiting for the right moment to push.

Platform success is based on user experience, not technical sophistication.

If the users don’t trust the new system, it doesn’t matter how good the code is. Build champions, fix forward, and show up for the teams who need the most help. The technical work is the easy part.